Psychoacoustics sounds like an abstract scientific concept, but it comes into play whenever you listen to music.

Listening to a track seems simple enough, but the way you experience your own songs and mixes is far from objective.

The systems in your brain and sensory organs that turn vibrating air patterns into music are full of strange quirks.

Knowing the basics of how they work can help you deal with some of the most fundamental processes in mixing.

In this article I’ll explain the basics of psychoacoustics and show why it’s relevant to your music.

What is psychoacoustics?

Psychoacoustics is the science of how humans perceive and understand sound. It includes the study of the mechanisms in our bodies that interpret sound waves as well as the processes that occur in our brains when we listen.

That may sound completely academic, but some psychoacoustic phenomena have a big impact when it comes to music production—especially mixing and mastering.

I’ll go through the most important psychoacoustic concepts for producers, explain why they matter, and show how understanding them can help you make better music.

Music perception and cognition

Psychoacoustics is divided into two main areas—perception and cognition.

Perception deals with the human auditory system, while cognition focuses on what happens in the brain.

The two systems are tightly linked and influence each other in many ways.

Let’s start with perception.

Human hearing range

Your experience of sound and music would be completely different if your senses worked like audio measuring equipment.

For starters, your body’s auditory system can only process sound waves within a certain range of frequencies.

That range spans roughly from 20 Hz to 20 kHz.

Frequencies below 20 Hz cease to seem like a unified tone and become more like a series of pulses. Frequencies above 20 kHz disappear entirely—if you’re lucky enough to get that far.

20 kHz is the upper limit for the most sensitive human listeners. Most adults drop off much earlier at around 16 or 17 kHz.
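If it helps to think of that range in code, here’s a tiny sketch that places a frequency against an assumed hearing range. The `hearing_band` helper is hypothetical, and its 20 Hz and 20 kHz defaults come straight from the figures above. Lowering `high_hz` to something like 16 kHz approximates a typical adult listener.

```python
def hearing_band(freq_hz: float, low_hz: float = 20.0, high_hz: float = 20_000.0) -> str:
    """Hypothetical helper: place freq_hz relative to an assumed hearing range.

    Defaults use the 20 Hz to 20 kHz range from the text. Lower high_hz
    (for example to 16_000) to approximate a typical adult listener.
    """
    if freq_hz <= 0:
        raise ValueError("frequency must be positive")
    if freq_hz < low_hz:
        return "below range"   # tends to be felt as pulses rather than a tone
    if freq_hz > high_hz:
        return "above range"   # inaudible for this listener
    return "audible"


if __name__ == "__main__":
    for f in (10, 440, 18_000):
        # Compare the full range with an assumed 16 kHz adult limit.
        print(f, hearing_band(f), hearing_band(f, high_hz=16_000))
```

An 18 kHz tone lands inside the full range but outside the assumed adult limit, which is exactly the gap described above.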

That range may seem wide, but there’s plenty of audio activity that occurs at frequencies we’ll never experience.

For example, the echolocation systems of bats and dolphins can detect frequencies of over 100 kHz—imagine what that sounds like!

Equal loudness contours

Even within the 20 Hz to 20 kHz range, your hearing isn’t a tidy linear system.

Some frequencies seem more intense than others—even if they’re exactly the same decibel level.

The reason has to do with the structure of your ear.

After a sound enters your ear, it travels into an organ called the cochlea that looks a bit like a rolled-up garden hose.

The sound waves excite small sensory hair cells that line the cochlea’s interior, each topped with a bundle of tiny projections called stereocilia.

These cells send the electrical signals your brain interprets as sound and music. But your ear doesn’t deliver every frequency to them equally.

The ear canal and middle ear naturally boost some frequency ranges more than others, and those ranges line up with some of the most important sounds in our environment. For example, critical ranges like the upper midrange, where much of the detail in human speech occurs, seem naturally louder to us.

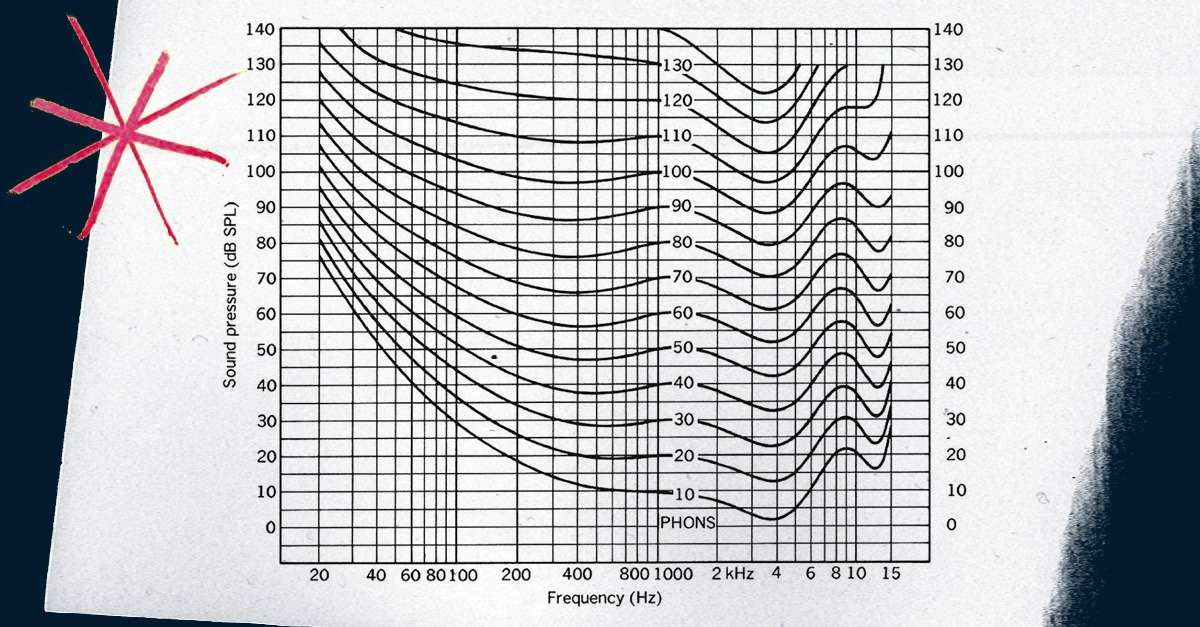

To make sense of these variations, researchers created graphs that show how frequency and sound pressure level (SPL) relate to perceived loudness.

These charts are called equal loudness contours, or sometimes Fletcher-Munson curves after the first researchers to work on them.

Every point along a single curve is perceived as equally loud, even though the actual SPL changes with frequency.

You can follow the trend along each line to see which parts of the spectrum your perception emphasizes. Wherever a curve dips, your ear needs less SPL to hear the same loudness.

In mixing, this explains why pushing the upper midrange frequencies only a few dB louder with your EQ plugins can make such a drastic difference.
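A practical cousin of the equal loudness contours is A-weighting, the curve sound level meters apply to roughly mimic how quiet sounds are heard. It’s a simplified approximation based on a single low-level (about 40 phon) contour, not a replacement for the full set of curves, but it has the same basic shape. Here’s a sketch of the standard analog A-weighting formula from IEC 61672; the function name is my own.

```python
import math


def a_weighting_db(freq_hz: float) -> float:
    """A-weighting gain in dB at freq_hz, using the analog formula from IEC 61672.

    This is a simplified, inverted approximation of one quiet (about 40 phon)
    equal loudness contour, not an equal loudness contour itself.
    """
    if freq_hz <= 0:
        raise ValueError("frequency must be positive")
    f2 = freq_hz ** 2
    # 20.6, 107.7, 737.9 and 12194 Hz are the pole frequencies of the filter.
    ra = (12194.0 ** 2 * f2 ** 2) / (
        (f2 + 20.6 ** 2)
        * math.sqrt((f2 + 107.7 ** 2) * (f2 + 737.9 ** 2))
        * (f2 + 12194.0 ** 2)
    )
    # The +2.00 dB offset normalizes the curve to roughly 0 dB at 1 kHz.
    return 20.0 * math.log10(ra) + 2.00


if __name__ == "__main__":
    for f in (50, 100, 1_000, 3_000, 10_000):
        print(f"{f:>6} Hz: {a_weighting_db(f):6.1f} dB")
```

Run it over a few frequencies and you’ll see large negative values in the low end, roughly 0 dB at 1 kHz by design, and a small positive bump in the low kilohertz range, the same upper-midrange sensitivity the contours show.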

To learn more about the role of equal loudness contours in mixing, check out our article on Fletcher-Munson curves.

Auditory masking

Even with a single sound source, your perception system has a big effect.

But start layering sounds together and it gets even more complicated.

When multiple sounds play at the same time, a psychoacoustic phenomenon called masking comes into play.

Masking explains why it’s so hard to clearly hear the timbre of two sounds with overlapping frequencies.

Each sound is distinct in the air. But if two sounds with similar frequencies reach your cochlea at the same time, they excite the same region of hair cells.

If they’re close enough, you can’t tell them apart.

Your auditory system needs a minimum frequency difference between two sounds, roughly the width of what researchers call a critical band, to process them individually.
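Researchers often estimate the width of a critical band with the equivalent rectangular bandwidth (ERB). The sketch below uses Glasberg and Moore’s ERB formula plus a deliberately crude, hypothetical rule: two pure tones closer together than one ERB are flagged as likely to interact. Real masking also depends on level, duration and timbre, so treat this as a rough illustration only.

```python
def erb_hz(freq_hz: float) -> float:
    """Equivalent rectangular bandwidth in Hz (Glasberg & Moore, 1990)."""
    return 24.7 * (4.37 * freq_hz / 1000.0 + 1.0)


def likely_to_interact(f1_hz: float, f2_hz: float) -> bool:
    """Hypothetical rule of thumb: True if two pure tones sit within one ERB.

    Real masking also depends on level, duration and spectral shape,
    so this only flags tones that share roughly the same cochlear region.
    """
    center_hz = (f1_hz + f2_hz) / 2.0
    return abs(f1_hz - f2_hz) < erb_hz(center_hz)


if __name__ == "__main__":
    for f in (100, 1_000, 5_000):
        print(f"ERB at {f} Hz: {erb_hz(f):.0f} Hz")
    print(likely_to_interact(1_000, 1_050))
    print(likely_to_interact(1_000, 1_500))
```

Notice that the ERB gets wider in hertz as frequency rises, so the same gap in hertz separates two sounds far more easily in the low end than in the top end.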

Masking is one of the main reasons EQ is used so often in mixing. Most of the signals you’ll be summing at your master bus share energy in the same frequency ranges.

To EQ a track well, cut the frequencies that don’t contribute to the sound’s role in your mix while emphasizing the ones that matter.
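Under the hood, a bell-shaped cut like that is often a peaking biquad filter. Here’s a minimal sketch based on the peaking EQ formulas in Robert Bristow-Johnson’s Audio EQ Cookbook. The function names and the example settings (a 4 dB cut at 250 Hz with a Q of 1 at 48 kHz) are illustrative assumptions, not a recommendation for any particular mix or a description of any specific plugin.

```python
import cmath
import math


def peaking_eq(f0_hz, gain_db, q, fs_hz):
    """Peaking EQ biquad coefficients from the RBJ Audio EQ Cookbook.

    Returns (b, a) normalized so that a[0] == 1.
    """
    amp = 10.0 ** (gain_db / 40.0)
    w0 = 2.0 * math.pi * f0_hz / fs_hz
    alpha = math.sin(w0) / (2.0 * q)
    cos_w0 = math.cos(w0)
    b = [1.0 + alpha * amp, -2.0 * cos_w0, 1.0 - alpha * amp]
    a = [1.0 + alpha / amp, -2.0 * cos_w0, 1.0 - alpha / amp]
    return [x / a[0] for x in b], [x / a[0] for x in a]


def response_db(b, a, freq_hz, fs_hz):
    """Magnitude response of the biquad at freq_hz, in dB."""
    z_inv = cmath.exp(-1j * 2.0 * math.pi * freq_hz / fs_hz)
    num = b[0] + b[1] * z_inv + b[2] * z_inv ** 2
    den = a[0] + a[1] * z_inv + a[2] * z_inv ** 2
    return 20.0 * math.log10(abs(num / den))


def process(samples, b, a):
    """Filter a list of samples (Direct Form I, a[0] assumed to be 1)."""
    x1 = x2 = y1 = y2 = 0.0
    out = []
    for x in samples:
        y = b[0] * x + b[1] * x1 + b[2] * x2 - a[1] * y1 - a[2] * y2
        x2, x1 = x1, x
        y2, y1 = y1, y
        out.append(y)
    return out


if __name__ == "__main__":
    fs = 48_000.0
    b, a = peaking_eq(250.0, -4.0, 1.0, fs)  # hypothetical "mud" cut
    for f in (100, 250, 1_000, 10_000):
        print(f"{f:>6} Hz: {response_db(b, a, f, fs):+.2f} dB")
```

The cut reaches its full depth at the center frequency and fades back toward 0 dB further away, which is why a narrow, targeted cut can clear space for another sound without thinning out the whole track.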

Sound localization

If that weren’t enough, your auditory system also affects how you locate sounds around you in space.

The process is called sound localization and it helps you situate the sources of different sounds in your environment.

Sound localization relies on several different factors to help you determine where a sound is coming from.

The first is the physical distance between your ears themselves.

Since human ears are located on either side of the head, sound from different directions reaches the left and right ear at slightly different times and intensities.

Your brain uses those differences, along with cues from the sound’s tone and timbre, to work out where it’s coming from.
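To get a feel for how small these timing differences are, here’s a sketch of Woodworth’s classic spherical-head approximation of the interaural time difference (ITD). It assumes a perfectly round head with a radius of 8.75 cm and a speed of sound of 343 m/s; real heads and ears vary, and the function name is my own.

```python
import math

SPEED_OF_SOUND_M_S = 343.0  # approximate speed of sound in air at about 20 °C
HEAD_RADIUS_M = 0.0875      # assumed average head radius (8.75 cm)


def itd_seconds(azimuth_deg, head_radius_m=HEAD_RADIUS_M, c=SPEED_OF_SOUND_M_S):
    """Woodworth spherical-head estimate of the interaural time difference.

    azimuth_deg: 0 means straight ahead, 90 means directly to one side.
    """
    if not 0.0 <= azimuth_deg <= 90.0:
        raise ValueError("azimuth must be between 0 and 90 degrees")
    theta = math.radians(azimuth_deg)
    return (head_radius_m / c) * (theta + math.sin(theta))


if __name__ == "__main__":
    for az in (0, 30, 60, 90):
        print(f"{az:>2} deg: {itd_seconds(az) * 1000:.3f} ms")
```

Even a source directly to one side only produces a difference of roughly two-thirds of a millisecond under these assumptions.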

This process is what makes it possible to use panning in your mix to create a nice, wide stereo image.
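A typical DAW pan control works by changing level rather than timing. One common approach is a constant-power (sine/cosine) pan law, sketched below under that assumption. Hosts differ in the exact law they use, so `constant_power_pan` is an illustrative helper rather than any particular DAW’s behavior.

```python
import math


def constant_power_pan(pan):
    """Left/right gains for pan in [-1, 1]: -1 hard left, 0 center, 1 hard right.

    Sine/cosine law: left**2 + right**2 == 1 at every position, so a centered
    source sits about 3 dB down in each channel.
    """
    if not -1.0 <= pan <= 1.0:
        raise ValueError("pan must be between -1 and 1")
    angle = (pan + 1.0) * math.pi / 4.0  # maps -1..1 onto 0..pi/2
    return math.cos(angle), math.sin(angle)


if __name__ == "__main__":
    for p in (-1.0, -0.5, 0.0, 0.5, 1.0):
        left, right = constant_power_pan(p)
        print(f"pan {p:+.1f}: L={left:.3f} R={right:.3f}")
```

Keeping the total power constant means a sound doesn’t seem to get louder or quieter as you sweep it across the stereo field.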

Cognition

Music cognition gets a whole lot more complicated. Your brain has powerful pattern recognition systems that help you interact with the language of music.

Why does a 2:1 frequency ratio produce an octave? Answering fundamental questions like that would take an advanced university degree, so I won’t get into them here.
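The arithmetic side of that question, at least, is easy to show even if the cognition behind it isn’t. This sketch measures interval sizes in cents, where 1200 cents is one octave, and converts MIDI note numbers to 12-tone equal temperament frequencies with A4 at 440 Hz. Both helper names are my own.

```python
import math


def ratio_to_cents(ratio):
    """Size of a frequency ratio in cents (1200 cents per octave)."""
    if ratio <= 0:
        raise ValueError("ratio must be positive")
    return 1200.0 * math.log2(ratio)


def midi_to_hz(note, a4_hz=440.0):
    """12-tone equal temperament frequency for a MIDI note number (A4 = 69)."""
    return a4_hz * 2.0 ** ((note - 69) / 12.0)


if __name__ == "__main__":
    print(ratio_to_cents(2.0))            # an octave
    print(round(ratio_to_cents(1.5), 2))  # a just perfect fifth (3:2)
    print(midi_to_hz(57), midi_to_hz(69), midi_to_hz(81))
```

Raising a note by 12 semitones doubles its frequency, and a 3:2 ratio works out to about 702 cents.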

Even so, there are some simple principles from music cognition that can help you produce better music.

For example, cognitive psychoacoustics comes into play when a listener’s internal biases affect how they evaluate different elements of the production process.

I’m talking about situations like choosing between cheap or expensive gear, or different audio file formats.

For some controversial equipment like boutique cables, even accomplished mix engineers can’t tell the sonic difference between cheap and expensive in blind scientific tests.

However, when the participants are told which is which, many people choose subconsciously according to their internal bias.
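One common blind format is the ABX test, where a listener hears A, B, and a randomly chosen X, then says which one X matches. This sketch, with a hypothetical function name, computes how likely a given score would be if the listener were purely guessing, assuming each trial is an independent 50/50 guess.

```python
from math import comb


def p_value_guessing(correct, trials):
    """Chance of scoring at least `correct` out of `trials` by pure guessing.

    Assumes every trial is an independent 50/50 guess, as in an ABX test.
    """
    if not 0 <= correct <= trials:
        raise ValueError("correct must be between 0 and trials")
    ways = sum(comb(trials, k) for k in range(correct, trials + 1))
    return ways / 2 ** trials


if __name__ == "__main__":
    for score in (9, 12, 14):
        print(f"{score}/16 by chance: {p_value_guessing(score, 16):.3f}")
```

For example, 12 correct out of 16 would happen by chance only about 4% of the time, while 9 out of 16 is entirely consistent with guessing.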

Getting over your biases in mixing is difficult, but it’s one of the most important parts of becoming a good producer.

Here’s an article that tackles a common bias in plugin design called skeuomorphism.

Sonic experience

It’s clear that your body and your brain work together to create your experience of music.

The deeper questions of psychoacoustics aren’t essential to your everyday life as a musician, but understanding the basics can help you make more informed decisions about your work.

Now that you know some of the basic concepts in psychoacoustics, get back to your mix and see how they apply.